HANA 2.0 SPS 02 is now available, and it brings a number of important updates to the Predictive Analysis Library (PAL). The focus is on ease of use rather than on introducing a bunch of new algorithms (though there are a couple).

In this blog I’ll introduce the updates and show you where to find hands-on tutorial videos:

◉ Type-any procedures

◉ Web-based modeler

◉ New algorithms

◉ Enhanced algorithms

Type-any procedures

If you’ve worked with the PAL in the past, you’ll be familiar with the “wrapper generator”. This stored procedure generates stored procedures that are specific to the data structures of your particular scenario, which you specify explicitly with table types. This is partly because SQLScript is a statically typed language, so it’s difficult to overload a single procedure with multiple options. Whenever the data structures change, because your scenario has evolved or indeed because the PAL capabilities themselves have evolved, it’s often necessary to re-create the procedure.

The all-new Type-any syntax removes the need for all that by providing a library of ready-built procedures that you can call at will. No wrapper generator or table types required. So less development work for you, less maintenance, and more succinct code. A simple example might look like this:

-- parameters

CREATE LOCAL TEMPORARY COLUMN TABLE "#PARAMS" ("NAME" VARCHAR(60), "INTARGS" INTEGER, "DOUBLEARGS" DOUBLE, "STRINGARGS" VARCHAR(100));

INSERT INTO "#PARAMS" VALUES ('THREAD_RATIO', null, 1.0, null); -- Between 0 and 1

INSERT INTO "#PARAMS" VALUES ('FACTOR_NUMBER', 2, null, null); -- Number of factors

-- call : results inline

CALL "_SYS_AFL"."PAL_FACTOR_ANALYSIS" ("MYSCHEMA"."MYDATA", "#PARAMS", ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?);

So you just need to bring the input data and set any run-time parameters, and you can call the Type-any procedure directly. Notice that the procedure is located in the _SYS_AFL schema and its name is prefixed with PAL_. The pattern is pretty much the same for all algorithms. Unfortunately the real-time state-enabled scoring didn’t quite make it, but we can always hope this will be available via Type-any syntax at some point in the future.
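If you’d rather capture the outputs than have them returned inline, one option is to call the same procedure from an anonymous block and bind the outputs to table variables. This is only a minimal sketch: it assumes the "#PARAMS" table from the example above already exists in your session, and the variable names lt_out1 to lt_out11 are made up here simply to mirror the eleven ? placeholders, so check the PAL reference for what each output actually holds.

```sql
DO BEGIN
  -- inputs: the same data and parameter tables as in the inline call
  lt_data   = SELECT * FROM "MYSCHEMA"."MYDATA";
  lt_params = SELECT * FROM "#PARAMS";

  -- outputs are bound to table variables (hypothetical names) instead of ?
  CALL "_SYS_AFL"."PAL_FACTOR_ANALYSIS" (:lt_data, :lt_params,
       lt_out1, lt_out2, lt_out3, lt_out4, lt_out5, lt_out6,
       lt_out7, lt_out8, lt_out9, lt_out10, lt_out11);

  -- look at whichever outputs you need
  SELECT * FROM :lt_out1;
END;
```

The nice thing about table variables is that you can filter or join the results in the same block before anything is returned to the client.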

I don’t know about you, but that’s how I envisioned calling a PAL algorithm way, way back when it was first introduced (HANA 1.0 SPS 04, anyone?). Better late than never, I suppose!

Web-based modeler

Even though calling the PAL from code is now a piece of cake (see above), some folks prefer not to write any code at all. Hey, I’m one of those!

HANA introduced the application function modeler (AFM) in HANA 1.0 SPS 06, delivering the functionality via the flowgraph editor in HANA Studio (or in Eclipse with the HANA tools installed). However, we’ve never had a web-based version, even though XS Advanced and Web IDE for HANA do support the flowgraph editor.

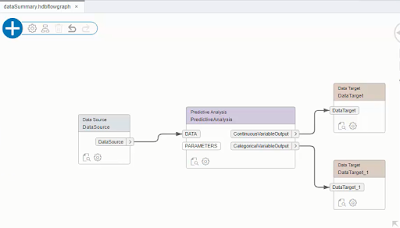

Well not anymore!!! With SPS 02 the web-based flowgraph editor now includes a Predictive Analysis node. You can now combine all the ETL stuff you know and love (hey we know that’s often 80% of the job with predictive) and fully integrate that with PAL functions. So your flowgraph might look like this:

OK, that’s admittedly a very simple example, but you get the idea. You can have as many Predictive Analysis nodes in your process flow as you want. When you build the flowgraph, the required stored procedures and tables are generated without you having to write a single line of SQLScript. Yay!

One thing to bear in mind is that the flowgraph editor is typically about one release behind the PAL. So the PAL functions available correspond to those delivered with HANA 2.0 SPS 01 (i.e. the new algorithms introduced with SPS 02 aren’t yet available). Also you may need to jump through a few hoops to get the Predictive Analysis node to appear – but that’s all covered in the hands-on tutorials.

New algorithms

A couple of new algorithms have been introduced to help with data preparation:

Factor Analysis (did you already spot it in the Type-any example above?) allows you to identify latent variables. Imagine you have information from a survey that includes income, education, and occupation. The responses to all of these tend to move together because they all relate to socio-economic status. The goal of Factor Analysis is to identify situations like this so that you can minimize the number of dimensions needed in your model.
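To make the survey scenario concrete, here’s a rough sketch of how such data could be fed to the Type-any procedure shown earlier. The table "MYSCHEMA"."SURVEY", its columns, the "#FA_PARAMS" table name, and the sample values are all invented for illustration, and I’m assuming the first column is treated as an ID. A real run would need far more rows, and the PAL reference lists any further parameters the function expects.

```sql
-- hypothetical survey data: three observed variables that move together
CREATE COLUMN TABLE "MYSCHEMA"."SURVEY" ("ID" INTEGER, "INCOME" DOUBLE, "EDUCATION" DOUBLE, "OCCUPATION" DOUBLE);
INSERT INTO "MYSCHEMA"."SURVEY" VALUES (1, 30.0, 10.0, 2.0);
INSERT INTO "MYSCHEMA"."SURVEY" VALUES (2, 45.0, 12.0, 3.0);
INSERT INTO "MYSCHEMA"."SURVEY" VALUES (3, 60.0, 16.0, 5.0);
INSERT INTO "MYSCHEMA"."SURVEY" VALUES (4, 52.0, 14.0, 4.0);
INSERT INTO "MYSCHEMA"."SURVEY" VALUES (5, 85.0, 18.0, 6.0);
INSERT INTO "MYSCHEMA"."SURVEY" VALUES (6, 38.0, 11.0, 2.0);

-- parameters: ask for a single latent factor (think "socio-economic status")
CREATE LOCAL TEMPORARY COLUMN TABLE "#FA_PARAMS" ("NAME" VARCHAR(60), "INTARGS" INTEGER, "DOUBLEARGS" DOUBLE, "STRINGARGS" VARCHAR(100));
INSERT INTO "#FA_PARAMS" VALUES ('FACTOR_NUMBER', 1, null, null); -- Number of factors

-- same call pattern as before, pointed at the survey table
CALL "_SYS_AFL"."PAL_FACTOR_ANALYSIS" ("MYSCHEMA"."SURVEY", "#FA_PARAMS", ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?);
```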

Multi-dimensional Scaling is similar in many ways in that it also allows you to reduce the number of dimensions in a given dataset. The goal is to simplify the data so that many observed dimensions that are highly similar can be reduced into a single dimension. This also helps with visualization (how do you visualize a dataset with more than 4 dimensions?).

Enhanced algorithms

Finally, a number of algorithms have been enhanced:

Real-time state-enabled scoring has been extended to support additional PAL functions. Real-time scoring is cool as it allows you to optimize response times when repeatedly scoring from the same model, such as when scoring is part of a transaction. For complex models, calling a regular scoring function involves parsing the model, which can take far longer than the scoring itself. However, state-enabled real-time scoring allows you to store the parsed model in memory, ready for ultra-fast execution as and when new transactions arrive. The newly supported functions are LDA Inference, NBC, BPNN, decision trees, PCA projection, cluster assignment, binning assignment, LDA project, LDA CLASSIFY, and Posterior Scaling. I’ve included an example using decision trees in the tutorial videos.

There are some generic parameters worthy of note:

- THREAD_RATIO is a new parameter allowing you to optimize the use of multi-threading based on a percentage of available threads

- HAS_ID allows you to specify whether or not the dataset has an ID in the first column

- CATEGORICAL_VARIABLE and DEPENDENT_VARIABLE allow you to consistently specify categorical and dependent variables irrespective of PAL function
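As a sketch of how these generic parameters could be set, they go into the same kind of parameter table used in the Type-any example. The table name "#GENERIC_PARAMS", the column names REGION and CHURNED, and the chosen values are all hypothetical. Which argument column each parameter uses is my assumption based on its meaning (a flag as an integer, a ratio as a double, a column name as a string), and not every PAL function accepts every parameter, so check the reference for the function you’re calling.

```sql
CREATE LOCAL TEMPORARY COLUMN TABLE "#GENERIC_PARAMS" ("NAME" VARCHAR(60), "INTARGS" INTEGER, "DOUBLEARGS" DOUBLE, "STRINGARGS" VARCHAR(100));

INSERT INTO "#GENERIC_PARAMS" VALUES ('THREAD_RATIO', null, 0.5, null);               -- Between 0 and 1: use about half of the available threads
INSERT INTO "#GENERIC_PARAMS" VALUES ('HAS_ID', 1, null, null);                       -- assumed flag: first column of the data is an ID
INSERT INTO "#GENERIC_PARAMS" VALUES ('CATEGORICAL_VARIABLE', null, null, 'REGION');  -- hypothetical column to treat as categorical
INSERT INTO "#GENERIC_PARAMS" VALUES ('DEPENDENT_VARIABLE', null, null, 'CHURNED');   -- hypothetical target column
```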
